Nash equilibrium: the strategy nobody can profitably deviate from

What “no profitable deviation” actually means

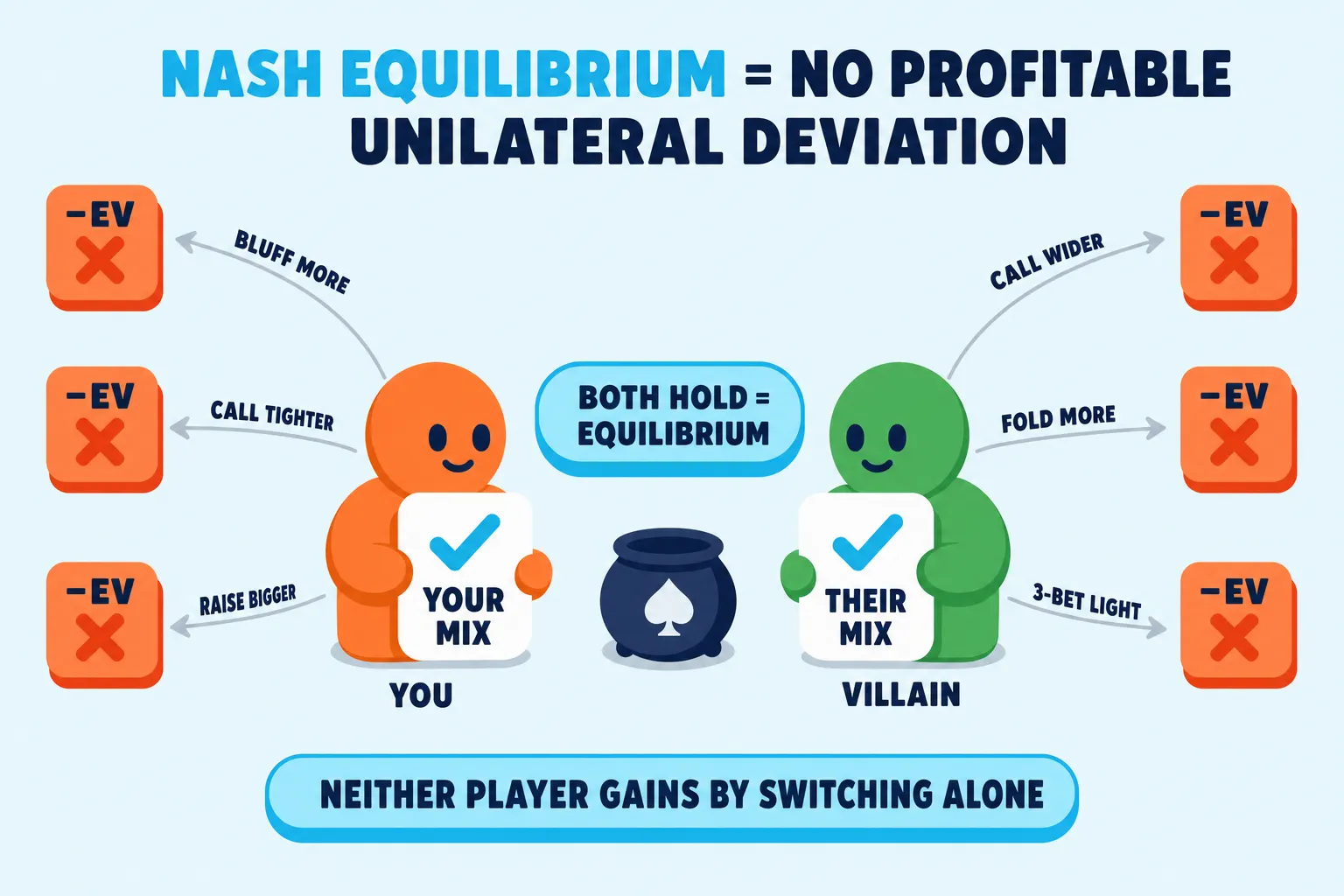

A Nash equilibrium is a set of strategies, one for each player, where nobody can improve their expected value by changing their own strategy alone. If you switch (call instead of raise, bluff a different fraction of the time, fold a hand you used to call), your EV does not go up. Neither does theirs if they make the same kind of unilateral switch. Everyone is already doing the best they can given what everyone else is doing. In poker books and solver output, “GTO” is shorthand for this exact idea.

The word “unilateral” is the load-bearing one. It means one player changes, and only that player changes. If you both update at once, the equilibrium can move. That’s why the concept is paired with the assumption that opponents will adjust correctly. An equilibrium strategy is the answer to the question: what should I play if my opponent already knows my strategy and is doing the best thing against it?

A useful mental shortcut:

- Equilibrium = play in a way that has no leak to attack, even if villain sees your whole strategy.

- Exploit = find their leak and take it, accepting that you now have a leak too.

In most poker writing, “Nash equilibrium” and “GTO” are used interchangeably for this baseline.

Related terms

Nash equilibrium vs GTO vs solver output

These three terms get used as synonyms, which is mostly fine, but they aren’t identical. The difference matters when somebody says “the solver said this is GTO so it must be Nash.”

| Term | What it is | What it isn’t |
|---|---|---|
| Nash equilibrium | The exact mathematical idea: a strategy profile where no player can improve by unilateral change. | A specific recipe. There can be multiple Nash equilibria for the same game. |
| GTO | The poker-room name for “playing a Nash equilibrium of the spot you’re in.” | Always the highest-EV play. Against bad players, an exploit usually wins more. |
| Solver output | A computed approximation of a Nash equilibrium under a fixed range, bet-size tree, and abstraction. | Proof of true equilibrium. The full game of NLHE has not been solved. |

Solvers report something called Nash distance or exploitability, often as a percent of the pot or in bb per 100 hands. A small number means the solver’s solution is close to a true equilibrium; zero would mean exact. In practice, you stop the solver when the number is small enough that no human could exploit the gap. That output gets called “GTO” in shorthand, but it is technically an ε-Nash (“epsilon-Nash” or approximate-Nash) strategy: close to equilibrium, not provably at it.
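To make “exploitability” concrete, here is a minimal sketch on a toy zero-sum game. It uses rock-paper-scissors rather than a poker tree, and the name `exploitability` and the payoff-matrix layout are illustrative assumptions, not any particular solver’s API. It measures how much EV the two players could gain in total if each switched alone to a best response; real solvers normalize and report this number in their own ways.

```python
# Row player's payoffs in rock-paper-scissors (zero-sum: column gets the negative).
# Rows and columns are ordered rock, paper, scissors. Toy game, assumed for illustration.
PAYOFF = [
    [0, -1, 1],
    [1, 0, -1],
    [-1, 1, 0],
]


def exploitability(row_strategy, col_strategy):
    """Total EV both players could gain by each best-responding alone."""
    n = len(PAYOFF)
    # EV of each pure row action against the column player's mix.
    row_action_evs = [
        sum(PAYOFF[i][j] * col_strategy[j] for j in range(n)) for i in range(n)
    ]
    # Row player's EV when the column player picks each pure action.
    row_ev_vs_col_action = [
        sum(row_strategy[i] * PAYOFF[i][j] for i in range(n)) for j in range(n)
    ]
    # Row's best response maximizes its EV; column's best response minimizes row's EV.
    # In a zero-sum game the gap between the two is the summed gain from deviating.
    return max(row_action_evs) - min(row_ev_vs_col_action)


uniform = [1 / 3, 1 / 3, 1 / 3]
rock_heavy = [0.5, 0.25, 0.25]

print(exploitability(uniform, uniform))     # equilibrium: the gap is zero
print(exploitability(rock_heavy, uniform))  # the rock-heavy mix can be attacked
```

The rock-heavy mix is punished by an opponent who always plays paper, which is the toy-game version of attacking a leak. A solver run drives the equivalent number toward zero without usually reaching it.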

The cleanest poker game where Nash is exactly known is short-stack heads-up push-or-fold. The action set is so small (shove or fold) that calculators can produce the equilibrium ranges directly. That’s what the Nash push/fold charts you see for sub-10bb tournament play actually are.

When equilibrium thinking matters most

Equilibrium logic earns its keep when reads are weak, opponents are tough, or the cost of being wrong is high.

- Against tough regulars. If they’re already balanced, a mistimed exploit just hands them EV.

- Against unknowns. No reads, no stats. The equilibrium baseline is the safe default until information arrives.

- In multi-tabling online play. You can’t read twelve tables at once. An equilibrium-anchored game scales without per-opponent attention.

- In heads-up subgames. Once a 6-max hand narrows to two players, Nash logic is mathematically airtight. The same holds once everyone but two players has folded preflop.

- As a study tool. Even players who deviate constantly at the table use solvers to learn what the equilibrium baseline looks like, so they know when their exploits are safe and when they’ve overcorrected.

It matters less when:

- The table is full of recreational players with obvious one-way leaks (calling stations, never-3-bettors, blind-defenders who fold too much).

- The pot is multi-way. Nash equilibrium still exists in 3+ player spots, but the math gets messier: a single fish’s mistake doesn’t redistribute EV evenly across the other players, and your equilibrium play can lose EV that another player at the table captures.

- You have a strong, repeatedly-confirmed read and the opponent isn’t going to counter-adjust before the night ends.

- The format is loose enough that opportunity cost from playing a tight equilibrium baseline is real (large-field MTT day 1, soft live cash).

Worked example: river bluff at equilibrium

The cleanest place to see Nash equilibrium in poker is a polarized river spot: the bettor has either the nuts or air, the caller has a bluff-catcher.

Pot is $100. Hero’s range on the river is half nuts, half air. Hero bets pot ($100). The opponent has a bluff-catcher that beats Hero’s air and loses to Hero’s value.

What bluff frequency makes Hero’s range balanced (an equilibrium)?

The math: the bluff-catcher’s call has to be break-even. Set call EV to zero, where f is the share of Hero’s bet range that’s a bluff:

EV(call) = f × ($100 + $100) − (1 − f) × $100 = 0

Expanding gives 300f − 100 = 0, so f = 1/3. That is two value bets for every bluff when the bet is pot-sized, the 2:1 value-to-bluff ratio you’ve probably seen quoted.

The mirror question, what call frequency makes Hero’s bluffs break-even, gives the bluff-catcher’s defense. The math is pot / (pot + bet) = 100 / 200 = 50%. The opponent has to call exactly half the time to make Hero’s air-hand bluffs zero-EV.
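The two break-even conditions generalize to any bet size. The sketch below is a minimal illustration, assuming the same polarized model (nuts or air against a pure bluff-catcher); the function names `bluff_fraction` and `call_frequency` are made up for this example.

```python
def bluff_fraction(pot: float, bet: float) -> float:
    """Share of the betting range that must be bluffs so a call is break-even.

    The caller risks `bet` to win `pot + bet`:
    f * (pot + bet) - (1 - f) * bet = 0  ->  f = bet / (pot + 2 * bet)
    """
    return bet / (pot + 2 * bet)


def call_frequency(pot: float, bet: float) -> float:
    """How often the bluff-catcher must call so a bluff is break-even.

    The bluffer risks `bet` to win `pot`:
    (1 - c) * pot - c * bet = 0  ->  c = pot / (pot + bet)
    """
    return pot / (pot + bet)


pot = 100
for bet in (50, 100, 200):  # half pot, pot, 2x pot
    f = bluff_fraction(pot, bet)
    c = call_frequency(pot, bet)
    print(f"bet {bet}: bluff share {f:.3f}, call frequency {c:.3f}")
```

For the pot-sized bet in the example, this reproduces the 1/3 bluff share and the 50% call frequency. Larger bets allow more bluffs and require fewer calls.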

Now check the equilibrium property. If the opponent calls more than 50%, Hero’s bluffs lose money, so Hero deviates by bluffing less. If they call less than 50%, Hero deviates by bluffing more. If Hero bluffs more than one-third, the opponent deviates by calling everything (because calling is now profitable on average). If Hero bluffs less, the opponent deviates by folding everything (because calling now loses on average). The 1/3 bluff-fraction paired with the 50% call-frequency is the only pair where neither side can profitably switch alone. That pair is the Nash equilibrium of this spot.
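The deviation check can be run numerically. This is a rough sketch under the same assumptions as the example ($100 pot, $100 bet, nuts-or-air bettor, pure bluff-catcher); the helper names and the tolerance are hypothetical choices for illustration.

```python
POT, BET = 100.0, 100.0  # values from the worked example


def ev_call(bluff_share: float) -> float:
    """Bluff-catcher's EV of calling when `bluff_share` of the bet range is air."""
    return bluff_share * (POT + BET) - (1 - bluff_share) * BET


def ev_bluff(call_freq: float) -> float:
    """Hero's EV of bluffing with air when the opponent calls `call_freq` of the time."""
    return (1 - call_freq) * POT - call_freq * BET


def caller_best_response(bluff_share: float, tol: float = 1e-9) -> str:
    ev = ev_call(bluff_share)
    if ev > tol:
        return "call everything"
    if ev < -tol:
        return "fold everything"
    return "indifferent"


def bettor_best_response(call_freq: float, tol: float = 1e-9) -> str:
    ev = ev_bluff(call_freq)
    if ev > tol:
        return "bluff more"
    if ev < -tol:
        return "bluff less"
    return "indifferent"


for share in (0.25, 1 / 3, 0.40):
    print(f"bluff share {share:.2f}: caller should {caller_best_response(share)}")
for freq in (0.40, 0.50, 0.60):
    print(f"call freq {freq:.2f}: bettor should {bettor_best_response(freq)}")
```

Only at a bluff share of 1/3 and a call frequency of 50% do both functions report indifference, which is the "nobody can profitably switch alone" condition.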

Real river spots are messier (multiple bet sizes, draws that didn’t get there, blocker effects, range overlap). The polarized abstraction strips it to the bones, which is why every textbook teaches it first.

Common mistakes

1) “Nash means the highest-EV play”

It doesn’t. Nash equilibrium gives you a baseline: the strategy that cannot be profitably attacked by a perfectly adapting opponent in a heads-up zero-sum spot. Against a calling station who never folds rivers, an exploitative strategy that bluffs less and value-bets thinner wins more chips per hand than the equilibrium baseline. Equilibrium is the unexploitable answer; the highest-EV answer changes with the opponent.
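A quick sketch of that gap, reusing the polarized river toy game ($100 pot, $100 bet, Hero’s range half nuts and half air). The assumptions are loud ones: Hero always bets the nuts, air that checks wins nothing, and the toy model has no thin value bets, so only the "bluff less" half of the exploit shows up. The function name `hero_ev` is invented for this example.

```python
POT, BET = 100.0, 100.0
NUTS_SHARE = 0.5  # Hero's river range: half nuts, half air (toy assumption)


def hero_ev(bluff_freq_with_air: float, villain_call_freq: float) -> float:
    """Hero's average river EV across the whole range.

    Assumes Hero always bets the nuts, bluffs air `bluff_freq_with_air`
    of the time, and wins nothing when air gives up.
    """
    # Nuts: wins the pot, plus the bet whenever villain calls.
    ev_value = POT + villain_call_freq * BET
    # Bluff: wins the pot on a fold, loses the bet on a call.
    ev_bluff = (1 - villain_call_freq) * POT - villain_call_freq * BET
    ev_air = bluff_freq_with_air * ev_bluff
    return NUTS_SHARE * ev_value + (1 - NUTS_SHARE) * ev_air


EQUILIBRIUM_BLUFF = 0.5  # bluffing half the air makes bluffs 1/3 of the bet range
NEVER_BLUFF = 0.0

print(hero_ev(EQUILIBRIUM_BLUFF, 1.0))  # equilibrium vs. a station who always calls
print(hero_ev(NEVER_BLUFF, 1.0))        # exploit vs. the same station
print(hero_ev(NEVER_BLUFF, 0.0))        # exploit after villain adjusts to always folding
```

In this toy model the equilibrium mix earns $75 against the station, never bluffing earns $100, and the same never-bluff strategy drops to $50 if the opponent counter-adjusts by folding everything. That is the trade: the exploit wins more against the leak and opens a leak of its own.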

2) “Nash equilibrium is one fixed play”

Equilibrium is usually a frequency, not a single action. The whole point of a mixed strategy is that some hands get played multiple ways at equilibrium because different actions have the same expected value, leaving the player indifferent. “Always 3-bet AKs” is a pure strategy; “3-bet AKs 70% and call 30%” is a mixed one. Solvers report mixed frequencies for marginal hands constantly. Treating equilibrium as a single chart of “do this with this hand” misses how it actually works.

3) “Poker is solved”

Heads-Up Limit Hold’em was essentially solved in 2015. In Heads-Up No-Limit Hold’em, an AI (Libratus, 2017) beat top human professionals, but the game itself is not solved; its game tree has roughly 10^160 decision points, far more than the number of atoms in the observable universe. Six-max cash, full-ring cash, and tournament play are nowhere near solved. What solvers produce is an ε-Nash equilibrium of a specific abstracted spot: fixed ranges, fixed bet sizes, simplified game tree. “The solver said so” is a useful starting point, not a final answer.

4) “Nash equilibrium guarantees you can’t lose money”

In a heads-up zero-sum game, it guarantees you cannot be exploited below the game’s equilibrium value. That value can still include forced costs from blinds or position; the point is that an opponent deviating on their own cannot push you lower. In multi-way pots, the guarantee is weaker. A fish’s mistake at a 6-max table can hand one of the other players a large EV gain while costing you a smaller amount of EV, even if you stick to equilibrium. Once a third player is involved, equilibrium thinking is still useful, but “you’re safe” is no longer the right framing.

FAQ

Is GTO the same as Nash equilibrium in poker?

Effectively yes, with caveats. In most poker books that use these terms, “GTO” is treated as the poker-table name for “play a Nash equilibrium of the modeled spot.” The caveat is that real solver output is an approximation: it computes an ε-Nash equilibrium under a specific abstraction (a fixed range, a fixed bet-size tree, a simplified game). Calling that “GTO” is shorthand. The underlying math is Nash equilibrium; the practical artifact is an approximation of it.

Why do solvers mix some hands and not others?

Because of the indifference principle: a hand only gets played multiple ways at equilibrium when the available actions have exactly the same EV, leaving the player indifferent between them. Most hands have one clearly higher-EV action and the solver picks it 100%. The hands you see split (50/50 bet/check, 70/30 raise/call) are the marginal ones whose EV is balanced on a knife edge by the opponent’s response. If the opponent’s strategy shifts even slightly, the indifferent hands collapse to a single best action.

Should I just memorize equilibrium charts and play them?

Not exactly. Solver output memorized without understanding why a hand mixes will fail you the first time the spot looks slightly different (different stack depth, different opening sizing, different opponent type). The point of studying Nash equilibrium isn’t to mechanize the table; it’s to learn what the unexploitable baseline looks like so you can see how far the table is from it, and where deviating wins more EV than playing the floor. Most strong players use equilibrium ideas to build pattern recognition first, then deviate based on opponent reads and population tendencies.